Social media giant Instagram has signalled a new focus on the wellbeing of its users, with a number of new, artificial intelligence-focused features on the way.

Australia will be the second country in the world where Instagram trials hiding the number of likes on a photo from other users, in an effort to reduce stress and pressure. The company will also use artificial intelligence to try to stamp out bullying on the popular photo and video-sharing platform.

As part of worldwide trials, Instagram users in Australia won’t be able to see how many likes a photo or video has on the platform, and will instead see “others like this” on the post.

Users will still be able to see how many likes their own photo has.

Australia is the second country to trial the feature, after Canada in May. Mia Garlick, Director of Public Policy for Instagram in Australia and New Zealand, said it’s about taking the competition out of social networking.

“We know that people come to Instagram to express themselves and to be creative and follow their passions, and we want to make sure it’s not a competition,” Garlick told Hack.

“We want to make sure that people are not feeling like they should like a particular post because it’s getting a lot of likes, and that they shouldn’t feel like they’re sharing solely to get likes.

“We want to see if this actually improves the experience and depressurises Instagram.”

It follows a number of studies that have found that the social media platform can have a negative impact on the mental health of young people, including a Pew Research Centre study that found more than a third of teenagers felt “pressure” to post content they thought would get a lot of likes.

The trial is part of Instagram’s new focus on the wellbeing of its users, with a number of new features in the works.

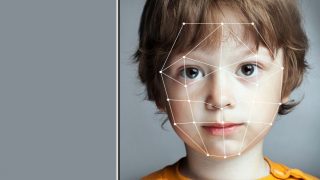

These include an anti-bullying tool that uses artificial intelligence to warn users when they are about to post a comment deemed to be bullying.

If the classifier detects “borderline content”, it will encourage the user to rethink the wording of the comment.

“The idea is to give you a little nudge and say, ‘hey, this might be offensive’, without stopping you from posting,” Instagram wellbeing designer Francesco Fogu told Time.

In a wide-reaching interview with Time, Instagram boss Adam Mosseri said he was willing to introduce new features that could lead to users spending less time on the platform, if it meant putting their wellbeing first.

“We will make decisions that mean people use Instagram less if it keeps people more safe,” Mosseri told Time.

“It would hurt our reputation and our brand over time. It would make our partnership relationships more difficult. There are all sorts of ways it would strain us. If you’re not addressing issues on your platform, I have to believe it’s going to come around and have a real cost.”

Mosseri, who took over the top job in October, said combating cyber bullying on Instagram is his “top priority”.

The company will also soon be rolling out the “Restrict” feature, which will allow users to block someone without the other user even knowing.

The user will then be able to view any comments from that account before they are made public, and can approve, delete or leave them pending.

The Instagram head revealed that the company has three separate bullying classifiers scanning the platform’s content: one each for text, photos and videos.

The technology is currently live and flags content every hour, but is in its “pretty early days”.

Instagram’s current working definition of bullying is any content that is intended to “harass or shame an individual”, including insults, shaming, identity attacks, disrespect, unwanted contact and betrayals.

The company is also checking all of its products before launch and considering whether they could be “weaponised”.
