Legal bodies have torn into the government’s crackdown on “abhorrent” social media content, warning of “negative unintended consequences”.

Passed last week by both the Senate and House of Representatives within a day and without amendment, the Criminal Code Amendment (Sharing of Abhorrent Violent Material) Bill 2019 was a direct response to last month’s mass shooting in Christchurch, New Zealand.

Some 50 people were killed and nearly as many injured by a lone gunman who live-streamed his rampage on Facebook but went undetected for more than 17 minutes.

The legislation introduces two new offences, threatening executives with up to 3 years’ imprisonment and fines of up to 10 percent of the company’s revenue if they don’t “remove abhorrent violent material expeditiously”.

The legislation also requires social media companies anywhere in the world to notify the Australian Federal Police if they become aware they are broadcasting such conduct that is happening in Australia.

While its goals are understandable, the government’s rapid action came “without any meaningful consultation”, Digital Industry Group managing director Sunita Bose said in the wake of the passage of the legislation, and “does nothing to address hate speech, which was the fundamental motivation for the tragic Christchurch attacks.”

The consultation process should have included “the technology industry, legal experts, the media and civil society”, Bose added, “but that didn’t happen this week.”

High-profile technology figures were up in arms about the passage of the legislation, with Atlassian co-founder and co-CEO Scott Farquhar launching a Twitter tirade in which he excoriated the “flawed” new legislation, which “will unnecessarily cost jobs and damage our tech industry.”

Anyone working for a company that allows user-generated content – including news sites, social media sites, dating sites, and job sites – “could potentially go to jail for 3 years,” Farquhar said while slamming a government that, he said, was caught in a “blind rush to legislate”.


This rush had fostered a lack of definitions around terms such as “expeditiously”, or even to whom the legislation would apply, he complained, leaving untenable grey areas that meant employees at such companies were “guilty until proven innocent”.

“Never has petty party politics so clearly been on display,” Farquhar wrote. “The ALP agreed [the legislation] was flawed but supported it anyway…. They need to violate users privacy to police this.”

Industry analyst Paul Budde also wasn’t mincing words, calling the social-media legislation “nothing less than crazy, totally unrealistic and plain stupid – quickly cobbled together to get a political election advantage.”

“The long-term implications… have not been thought through and will have a disastrous effect on the industry without delivering the outcomes the government aims to achieve.”

Pass it now, change it later

Government-imposed expectations on technology companies have already been in the spotlight given the Coalition government’s approach in pushing through the Telecommunications and Other Legislation Amendment (Assistance and Access) Bill 2018 – the enabling legislation for an encryption-bypassing scheme that has drawn world attention and concern from an industry that sees its commercial prospects being marginalised as a result.

Former prime minister Malcolm Turnbull garnered worldwide attention in 2017, when he countered arguments that strong encryption was mathematically impossible to bypass with a statement that “the laws of mathematics are very commendable, but the only law that applies in Australia is the law of Australia.”

The law was ultimately fast-tracked through Parliament, but after widespread criticism it is being reviewed by the Parliamentary Joint Committee on Intelligence and Security in a process that will accept submissions through July 1 and run through April 2020.

Similar concerns have been raised around the passage of the Sharing of Abhorrent Violent Material Bill, which went from draft to law in a matter of days.

Liberal Democrats senator Duncan Spender, for one, argued that the lack of consultation had produced imperfect legislation that passed “sight unseen” and was not properly introduced to the Senate.

He challenged the President of the Senate about why he “did not intervene in, or even comment on,” the process.

Spender also noted that a “flawed definition of torture” could ban depictions of “consensual acts of control and violence”, such as those on sado-masochist websites, and warned that the legislation had not been applied to conventional broadcasters.

Dealing with the challenge of scale

Just as some believe the passage and implications of the act are problematic, there is widespread divergence of opinion about just what social media companies can reasonably be expected to do.

Many questions have been raised about how the video and its derivatives made it past Facebook monitors who claim to be on constant lookout for potentially violent and objectionable material.

Facebook last year said it had doubled the size of its safety and security team to 20,000 people – including 7,500 content reviewers spread across more than 20 countries, enforcing the site’s policies around objectionable content.

Those policies include a promise to remove content “that glorifies violence or celebrates the suffering or humiliation of others”.

In the wake of the Christchurch attacks, Facebook announced that it would be utilising technological and human filters to find and remove white nationalist and white separatist content on Facebook and Instagram.

Scale remains a major issue in filtering online video – with users adding more than 6 hours of video content every second to YouTube alone.

Popular videos are regularly re-recorded and re-uploaded to avoid takedown efforts, as happened with the Christchurch video.

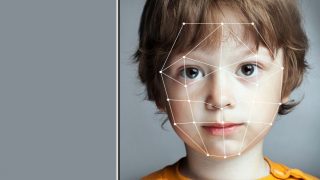

No company can hire enough people to patrol this volume of video for objectionable content manually, and the algorithms that drive artificial intelligence filters often struggle to discern intent or implied motives behind videos.

Due to the way it was filmed, the Christchurch shooter’s video, for example, would have looked to an AI algorithm like any of hundreds of thousands of first-person shooter videogame recordings that are freely posted by gamers and available online.

When videos are recorded, edited and re-uploaded to avoid being taken offline, it becomes impossible to keep up.

“We need to be sensible when working on these offences,” Law Council of Australia (LCA) president Arthur Moses said, “and not demand of social media companies what they cannot reasonably be expected to do.”

Implications for business and media

Activist group Digital Rights Watch warned that the new laws would create “a new class of Internet censorship”, with chair Tim Singleton Norton calling the law “a tremendous mistake” and arguing that “it’s simply wrong to assume that an amendment to the criminal code is going to solve the wider issue of content moderation on the Internet.”

“If Australian officials seek to ram through half-cooked fixes past Parliament without the proper expert advice and public scrutiny, the result is likely to be a law that undermines human rights.”

Law Council of Australia (LCA) president Arthur Moses warned that the passage of the legislation without “proper consultation” could fuel “negative unintended consequences” – such as hampering reporters’ and whistleblowers’ ability to report on atrocities committed around the world, or driving “unacceptable” broader media censorship.

“We now have a situation where important news can be censored across social media platforms,” he said.

Moses was also concerned about the long-term implications for confidence in Australia’s business environment.

Threatening executives of companies with jail time, and pegging potential fines against companies’ revenues rather than the seriousness of their breach, “would be bad for certainty and bad for business,” he said, warning of a potential “chilling effect” on overseas investment in Australia.

Business organisation the Australian Industry Group (Ai Group) urged the government to take a “prudent pause” before continuing to rush through legislation without proper consultation.

“Rushing through a bill without proper consultation is never a good idea and diminishes trust in our Parliamentary system,” Ai Group chief executive Innes Willox said, citing the example of the controversy around the Assistance and Access Bill.

“Industry and community concerns were not addressed and despite promises of subsequent amendment, remain unresolved to this day.”

“Creation of new offences, regulation of media and extraterritorial laws raise legitimate questions that cannot be answered in a day or two…. Rushing this legislation through will not make Australia safe.”
