Meta has announced a new commitment to underage privacy and safety, partially funding a platform that enables kids and teens to prevent nude or sexual content from surfacing online.

Social networking giant Meta, the company behind Facebook and Instagram, announced it was teaming up with Take It Down – a new platform operated by the US child protection organisation the National Center for Missing & Exploited Children (NCMEC).

The Take It Down tool, which will be available to millions of Australian users, helps young people remove sexually explicit content of themselves from the web and social media, and currently functions across five participating platforms: Facebook, Instagram, Yubo, OnlyFans and Pornhub.

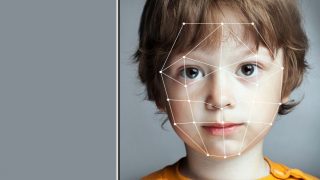

The site allows users to anonymously create a "digital fingerprint", or "hash", of a chosen lewd image. The hash is then added to a database that participating tech companies reference to scrub flagged images from their services.

The service can function without the image actually being uploaded, and also works with deepfakes, in which someone's face is digitally superimposed onto an existing photo or video, typically for pornography or deceptive content.
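For readers curious how an image can be flagged without ever leaving the device, the idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the article does not say which hashing algorithm Take It Down uses, so SHA-256 stands in here, and the names `fingerprint` and `HashDatabase` are hypothetical. A cryptographic hash like this one only matches exact copies of a file; a real service may well use a different kind of hash.

```python
import hashlib


def fingerprint(image_bytes: bytes) -> str:
    """Return a hex digest that stands in for the image (assumed: SHA-256)."""
    return hashlib.sha256(image_bytes).hexdigest()


class HashDatabase:
    """Hypothetical shared list of flagged hashes; it never stores images."""

    def __init__(self) -> None:
        self._hashes = set()

    def submit(self, digest: str) -> None:
        # Only the fingerprint is recorded, not the image itself.
        self._hashes.add(digest)

    def is_flagged(self, image_bytes: bytes) -> bool:
        # A platform hashes content on its side and looks for a match.
        return fingerprint(image_bytes) in self._hashes


# On the user's device: hash the image locally and send only the digest.
private_image = b"placeholder bytes standing in for an image file"
digest = fingerprint(private_image)

db = HashDatabase()
db.submit(digest)

# On a participating platform: compare uploaded content against the list.
same_copy_flagged = db.is_flagged(private_image)
other_image_flagged = db.is_flagged(b"some unrelated image")
```

In this sketch the database only ever receives a 64-character string, which cannot be turned back into the picture, which is why the image itself never needs to be uploaded.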

Meta announced the initiative in a post outlining the company's stance against inappropriate image-sharing and sextortion.

"Having a personal intimate image shared with others can be scary and overwhelming, especially for young people," said Meta.

"It can feel even worse when someone tries to use those images as a threat for additional images, sexual contact or money — a crime known as sextortion.

"Meta doesn’t allow content or behaviour that exploits young people, including the posting of intimate images or sextortion activities."

Currently, the service is confined to the platforms that have agreed to participate – meaning other platforms, such as the Meta-owned messaging service WhatsApp, will not for now remove flagged images sent through their services.

Should Meta do more?

Meta's announcement arrives after years of outside pressure for social media companies to step up against child exploitation.

In the past, Meta-owned Facebook worked with Australian government agencies such as the Office of the eSafety Commissioner to introduce a user-reporting measure against the unsolicited posting and sharing of intimate images.

However, Australian experts still suggest social media giants like Meta could take a more proactive approach to the issue.

"The service relies on user-reporting, rather than the companies proactively detecting image-based abuse or known child sexual exploitation and abuse material," Australia's eSafety Commissioner Julie Inman Grant told the ABC.

"We maintain the view that companies need to be doing much more in this area.

"Image-based abuse has become the scarlet letter of the digital age … once these go up on the internet, it can be very insidious and be difficult to take down, particularly if you're trying to work on your own."

Despite the criticism, Meta Australia's head of public policy Josh Machin lauded the tool as a world-first for young Australians.

"This world-first platform will offer support to young Australians to prevent the unwanted spread of their intimate images online, which we know can be extremely distressing for victims," he said.

Gavin Portnoy, a spokesperson for the NCMEC, explained that Take It Down is aimed more at those actively seeking to remove an image from the web anonymously.

"Take It Down is made specifically for people who have an image that they have reason to believe is already out on the web somewhere, or that it could be," said Portnoy.

"You’re a teen and you’re dating someone and you share the image. Or somebody extorted you and they said, ‘If you don’t give me an image, or another image of you, I’m going to do xyz.’

"To a teen who doesn’t want that level of involvement, they just want to know that it’s taken down, this is a big deal for them," he added.

In 2021, NCMEC's CyberTipline service received nearly 30 million reports of suspected sexual exploitation of children online – a majority of which were reported by platforms owned by Meta.

The same year saw Meta support the launch of a similar platform titled StopNCII, which addresses the increasingly common issue of "revenge porn" on social media platforms by helping adults to stop the spread of intimate images.

Meta concluded its Take It Down announcement by affirming it has developed more than 30 tools "to support the safety of teens and families" across its apps, including supervision tools and age-verification technology.

The new platform is open to anyone under the age of 18, and can be used by parents or trusted adults on behalf of a young person.