A new advisory body will develop laws for regulating high-risk artificial intelligence (AI) systems but leave low-risk AI untouched, Industry and Science Minister Ed Husic has revealed days before launching the government’s response to its responsible AI inquiry.

That inquiry, which published a discussion paper last June and received 510 submissions before closing in August, is guiding the government’s protracted development of policy and legislative guardrails to ensure that runaway development of AI – and, in particular, generative AI (genAI) – doesn’t run roughshod over human safety, work prospects, or legal protections.

Nearly all of the submissions – from industry, academia, and tech giants – called for action on “preventing, mitigating and responding to” AI’s harms, Husic told The Age as he finalises the government’s strategy to “ensure that AI works in the way it is intended, and that people have trust in the technology.”

Trust in AI was flagged by the likes of the Australian Federal Police, which acknowledged “that we must hold ourselves to a higher standard than those of our adversaries and be cautious in the use of broader private industry offerings”.

Aiming to normalise such caution, the coming advisory body could either follow the EU’s lead by bundling AI protections into a single AI Act or, recognising that most AI usage is safe, amend existing harm-focused laws to formally address behaviour by AIs.

For now, Husic suggested the government favours the latter approach – echoing earlier advice from the Law Council of Australia, which recommended a dedicated taskforce spend 18 months outlining legislative reform unless earlier action was indicated “where there are identified existing problems and there is evidence that existing laws and regulations are insufficient to address these problems”.

That included “clearly egregious” AI applications such as deepfakes, exploitation of vulnerable groups, and social scoring systems – all of which, the Australian Strategic Policy Institute (ASPI) said, are explicitly addressed in the EU’s AI Act but inadequately dealt with under Australia’s existing laws.

The government’s philosophy could, however, change as global AI regulations and technology continue to develop: “If there is a potential impact on the safety of people’s lives or their future prospects, then we will act,” Husic said.

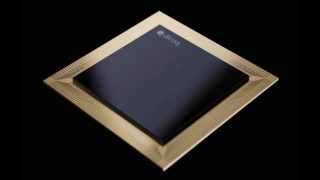

Human by design: AI’s new imperative

The government’s response, and the subsequent recommendations of the expert advisory panel, will set the agenda for AI regulation during 2024 – a process that Husic has previously described as “a balancing act the whole world is grappling with” as increasingly capable AI systems enhance the capabilities of humanoid robots, alter educational curricula, create new forms of harassment, speed scientific discovery, critically review and revise human-derived mathematical algorithms, and reshape modern warfare.

This “seismic shift in the way people work, live and learn” will require innovators to consider four key forms of engagement as they develop new AI-driven technologies, Accenture recommended in a new report advising that “enterprises that prepare now will win in the future”.

Delivering a ‘human by design’ AI future requires addressing four key areas, Accenture noted – including reshaping people’s relationship with knowledge as AI curates and personalises our access to data, and embracing autonomous, AI-powered agents to “not only assist and advise us, but also take decisive actions on our behalf”.

Human-focused interfaces will also create rich, immersive new worlds built around spatial computing, metaverse, digital twin and AR/VR technology, while using new human interfaces such as AI-powered wearables, brain-sensing neurotechnology, and eye and movement tracking.

With AI “starting to reason like us” and driving a “new spatial computing medium” in which machines “are getting much better at interacting with humans on their level”, Accenture believes genAI alone could impact 44 per cent of all working hours in the US, boost productivity across 900 different types of jobs, and create $12 trillion ($US8 trillion) in global economic value.

Yet as Husic and similar-minded analysts have warned, unfettered AI adoption risks unplanned consequences – leading the Australian Strategic Policy Institute (ASPI) to recommend that the government explicitly recognise AI’s dual role for civilian and military applications and regularly update its definition of AI to reflect new technological developments.

Last November, Australia joined 27 countries and the EU in signing the Bletchley Declaration – encapsulating a common desire that AI should be designed and deployed in a “safe, human-centric, trustworthy and responsible” way.

At that time, Husic – who in December launched a $17 million government investment to build up to five specialised AI Adopt centres to help businesses embrace AI – said it was crucial for Australia and other governments to “act now to make sure safety and ethics are in-built [and] not a bolt-on feature down the track.”
