Meta is unleashing AI that scans users’ bodies — from face shape to height — in an aggressive bid to root out underage accounts on Facebook and Instagram.

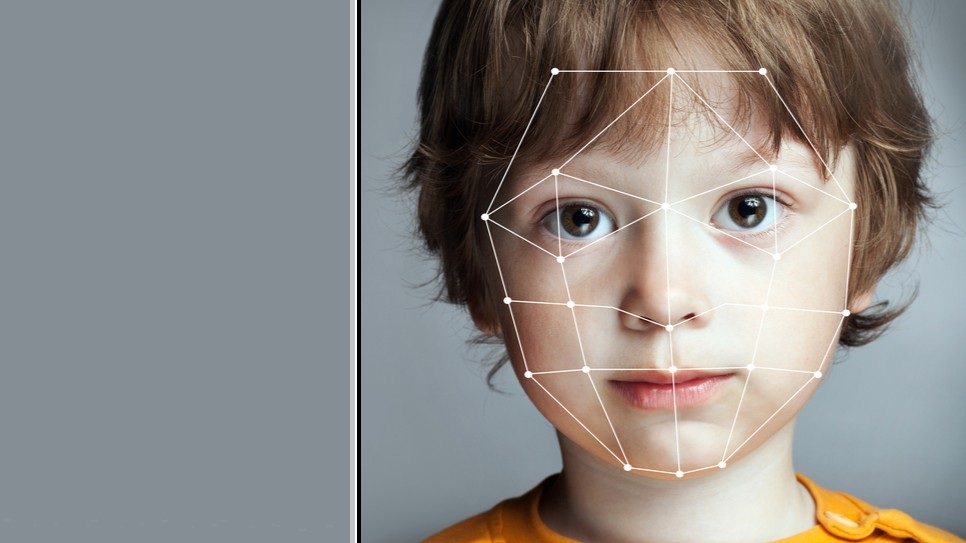

The company announced Tuesday it was developing “advanced AI” that uses visual analysis to detect underage accounts.

This new visual analysis technique will enable Meta’s AI to scan photos and videos for “visual clues” about a user’s age – including one’s height and bone structure.

These visual insights will be combined with Meta’s analysis of text and interactions in a bid to significantly increase the number of underage accounts it can spot and remove.

Meta said it was also adopting AI-powered textual analysis to analyse entire profiles for contextual clues – such as birthday celebrations or mentions of school grades – across posts, comments, profile bios, captions, and in-app features such as Instagram Reels, Instagram Live, and Facebook Groups.

“If we determine an account may be underage, it will be deactivated and the account holder will need to verify their age to continue using their account,” a Meta spokesperson said.

Australian rollout coming

Following a testing period in the US, Meta plans to expand its visual analysis technology for detecting under-13s globally over the coming months.

A spokesperson confirmed to Information Age that an Australian rollout for under-16s will follow, bolstering Meta’s compliance with the country’s social media minimum age (SMMA) laws.

“In Australia, we are committed to complying with the social media ban and are extending these advanced detection tools to under-16s in the coming weeks and months,” said Antigone Davis, vice president and global head of safety at Meta.

As of January, Meta had blocked more than 544,000 under-16s from its platforms in Australia.

In addition to removing underage users’ accounts, Meta said it would expand an existing initiative to automatically apply ‘Teen Account’ protections to under-18s.

Not facial recognition

Although a Meta spokesperson confirmed the company will scan photos and videos for “height or face size”, among other visual clues, Meta’s blog post emphasised the new AI technique is “not facial recognition”.

Meta seemed to distinguish the face-scanning technology from facial recognition by clarifying that “it does not identify the specific person in the image”.

Lisa Given, professor of Information Sciences at RMIT, said although “facial recognition does raise privacy concerns for people”, it was accurate to say the new techniques were not facial recognition.

“Facial recognition requires an existing photo, such as a driver’s licence, to compare against and demonstrate that the person in front of the camera is ‘recognised’ as the same person.

“However, facial scanning technology just looks at the person’s facial features, without archiving or storing a photo.”

She added that Meta’s new approach “comes with the same error rates and challenges in accurately assessing age” as those already highlighted by companies trying to comply with Australia’s social media ban.

“Generally, these processes have a one-to-three year error rate, particularly when trying to estimate the age of young children accurately,” said Given.

AI to review underage dob-ins

Meta has also “made it easier” to report underage accounts in-app and via its help centre, a spokesperson explained.

It has supplemented such reports with “AI-driven reviews” that it believes will “deliver higher accuracy and faster resolutions than human review alone”.

The company also introduced “strengthened measures” to spot and crack down on underage users who attempt to return to its platforms after their accounts are deactivated. Parents in the US will soon receive notifications about how to check and confirm their teens’ ages on Instagram and Facebook.

Meta ended its announcement by advocating for age verification at the app store and operating system level.

“While we believe these tools make a real difference, we continue to believe the most effective approach to age assurance is verification at the app store level giving parents a single, consistent place to manage their children’s access to all apps, rather than requiring each app to solve this complex challenge independently,” said Davis.