Online predators are having a very bad month, after police punctuated an eSafety Commissioner mandate – and the release of new draft industry codes against online child exploitation – with a series of high-profile arrests as a multi-modal crackdown continues.

The rescue of three children and arrest of 45 Western Australians, during an AFP and WA Police blitz that coincided with National Child Protection Week, was the latest in a series of police actions. These also included the August arrest of a man from the Hunter region of NSW, the June arrest of a North Gosford man, and myriad other arrests as authorities crack down on the online sharing of child exploitation material.

The continuing online distribution of such material reflects how common it remains: the Australian Centre to Counter Child Exploitation (ACCCE) received over 36,000 reports of child exploitation in the 2021-22 financial year – double the number in 2019 – and the AFP charged 221 offenders with 1,746 child exploitation-related offences last year.

Twitter, for one, suspended 597,000 accounts during the second half of 2021 for posting child sexual exploitation material – the single largest reason for account suspensions.

Aiming to stem the distribution of such material in Australia, the eSafety Commissioner last week ramped up enforcement efforts by issuing formal notices to Apple, Meta/WhatsApp, Microsoft/Skype, Snap and Omegle.

The networks have 28 days to outline how they have implemented policies to address the proliferation of child sexual exploitation material on their services – in line with the government’s new Basic Online Safety Expectations (BOSE) – or face fines of up to $555,000 per day.

Implemented early this year, the BOSE guidelines are built around six key expectations of online content providers, including taking “reasonable steps to proactively minimise” harmful and unlawful content; protecting children from content that is not age appropriate; and taking “reasonable steps” to prevent the harmful use of anonymous and encrypted services.

Providers must also facilitate reporting of such content; outline their terms of service and enforce penalties for people who breach them; cooperate with other service providers to clamp down on exploitation material; and respond to requests for information from the eSafety Commissioner.

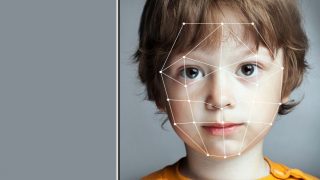

Running in parallel with the ongoing arrests, the regulatory crackdown is a proactive attack on the distribution of child exploitation material which, eSafety Commissioner Julie Inman Grant said, “is no longer confined to hidden corners of the dark web but is prevalent on the mainstream platforms we and our children use every day.”

Citing a surge in reports of such material since the pandemic began, Inman Grant said it was up to social media firms to apply widely available tools to help detect and block the content.

“The harm experienced by victim-survivors is perpetuated when platforms and services fail to detect and remove the content,” she said.

“We know there are proven tools available to stop this horrific material being identified and recirculated, but many tech companies publish insufficient information about where or how these tools operate, and too often claim that certain safety measures are not technically feasible.”

Fostering industry cooperation

Recent experience has shown that filtering tools still need work to be more effective. The eSafety Commissioner’s formal “please explain” notices come as industry groups float drafts of the first phase of eight codes intended to crack down on illegal and harmful online content.

Open for public comment until 2 October, the new draft industry codes and companion Industry Explanatory Paper come a year after industry groups released a position paper to guide development of the codes, and five months after the eSafety Commissioner formally requested the codes be developed.

Once registered and enforceable by the commissioner’s office, the new codes will apply to a range of service providers, including social media services, electronic services such as messaging and gaming, website providers, internet search engines, app distribution providers, hosting providers, internet service providers, and retailers and others who supply or maintain certain equipment.

The proactive approach in Australia, where the crackdown was already an election commitment in former Prime Minister Scott Morrison’s 2019 campaign, stands in stark contrast to countries such as the US, where efforts to legislate content policing have met resistance from civil liberties bodies and privacy advocates.

Australian authorities, by contrast, are determined not to water down the provisions.

“Industry must be upfront on the steps they are taking,” Inman Grant said, “so that we can get the full picture of online harms occurring and collectively focus on the real challenges before all of us.

“We all have a responsibility to keep children free from online exploitation and abuse.”