Meta is increasing funding for fact-checkers and working with Australian organisations in a bid to tackle misinformation and abuse on its platforms leading up to the Voice to Parliament referendum.

Later this year, Australian voters will be asked to approve an alteration to the Australian Constitution that would establish an Aboriginal and Torres Strait Islander "Voice" to represent Indigenous Australians to Parliament on matters of Indigenous affairs.

In preparation for the 2023 Australian Indigenous Voice referendum, Meta – the parent company of Facebook, Instagram and the newly released Threads – has committed to a "comprehensive strategy" aimed at combating misinformation, voter interference and abuse across its platforms.

In a Monday blog post, the social media giant announced it would provide a one-off funding boost for its independent Australian fact-checkers, deploy its specialised global teams to identify and take action against "threats" to the referendum, and work with misinformation monitoring service RMIT CrossCheck to increase monitoring for "misinformation trends" in the coming months.

Meta also said it is sharing best-practice guidance with journalists and other stakeholders on how to combat false information, and is working alongside the Australian Government ahead of the referendum.

"We are also coordinating with the Government’s election integrity assurance taskforce and security agencies in the lead up to the referendum," said Meta's Director of Public Policy for Australia, Mia Garlick.

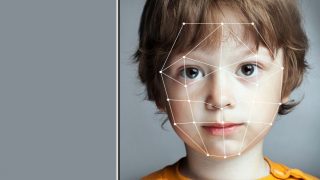

"We’ve also improved our AI so that we can more effectively detect and block fake accounts, which are often behind this activity.

"Meta has been preparing for this year’s Voice to Parliament referendum for a long time, leaning into expertise from previous elections."

Meta's push for factual integrity arrives alongside warnings from social media experts that fake accounts and disinformation could see an uptick during the referendum campaign.

Liberal senators have warned the integrity of the referendum warrants protection from "foreign interference", and that there is a "clear risk" it "could be used as another vehicle to subvert Australia’s democracy."

Meta may also be looking to avoid a repeat of the Cambridge Analytica scandal – which saw alleged "micro-targeting" ad campaigns against US voters on Facebook in 2016 – as the company announced it will maintain mandatory transparency tools for political ads and remove unauthorised ads from Facebook and Instagram.

"With the Voice to Parliament referendum set to take place towards the end of the year, the referendum will be a significant moment for Australia," said Garlick.

"Many Australians will use digital platforms to engage in advocacy, express their views, or participate in democratic debate.

"At Meta, we’re committed to playing our part to safeguard the integrity of the referendum."

Meta prepares for uptick in abuse

Leading into the referendum, Meta will increase its efforts to combat hate speech and provide mental health support to users.

Mental health groups are concerned First Nations Australians could face online abuse similar to what LGBTQIA+ communities experienced during Australia's marriage equality postal vote in 2017 – and the eSafety Commissioner has already noted an uptick in "racial vilification" of Indigenous people in response to the referendum.

To address this, Meta is partnering with online youth mental health service ReachOut to form a dedicated youth mental health initiative.

"No one should have to experience hate or racial abuse online and we don’t want it on our platforms," said Garlick.

"Feedback we’ve heard is that Aboriginal and Torres Strait Islander Peoples may need additional support, before, during and after the referendum."

Towards the end of July, Meta will also host a "Safety School" training session to bring members of Parliament, not-for-profits and advocacy groups up to speed on its policies, tools, and Facebook's latest moderation tool, Moderation Assist.

These efforts are in line with a broader push for positivity and safety across Meta's platforms.

Last week, when Meta launched its latest social media product, Threads, CEO Mark Zuckerberg said the new Twitter rival was intended as "an open and friendly public space for public conversation."

Meanwhile, Twitter has experienced a reported surge in online hate, leading Australia's eSafety Commissioner to issue a "please explain" notice before labelling the site a "bin fire".

Meta said it was willing to take further steps as necessary in the lead-up to the Voice referendum, and is also giving Australian users the option to see fewer political ads in their feeds.

"As we get more information regarding the date of the referendum, we’ll stay vigilant to emerging threats and take additional steps if necessary to prevent abuse on our platform while also empowering people in Australia to use their voice by voting," said Garlick.