Facebook has stepped up its efforts to remove deepfake videos from its platform, but a number of loopholes in its new policy will see many manipulated videos remain online.

In a blog post earlier this week, Facebook vice president of global policy management Monika Bickert outlined new rules for when the social media giant will remove manipulated content.

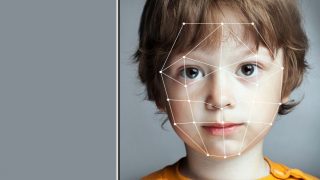

Deepfakes – videos or images that have been manipulated using artificial intelligence to show something that isn’t real – have become more common on Facebook and other online platforms, and have led to concerns over misinformation.

“Manipulations can be made through simple technology like Photoshop or through sophisticated tools that use artificial intelligence or ‘deep learning’ techniques to create videos that distort reality – usually called deepfakes,” Bickert said.

“While these videos are still rare on the internet, they present a significant challenge for our industry and society as their use increases.”

Facebook will now remove “misleading manipulated media” if it has been edited or synthesised in “ways that aren’t apparent to an average person and would likely mislead someone into thinking that a subject of the video said words that they didn’t actually say”.

To be removed, the content must also be the product of AI or machine learning that “merges, replaces or superimposes content onto a video, making it appear authentic”.

Manipulated videos and photos that have not been made with AI will be allowed to stay on Facebook.

The new policy has a number of other loopholes.

It will not apply to deepfakes that are made as parody or satire, or videos that have been edited “solely to omit or change the order of words”.

The new rules also won’t override Facebook’s controversial policy of not fact-checking any politicians, meaning that a presidential candidate would be able to post a deepfake video.

These loopholes mean that many of the most prominent existing deepfakes on Facebook would still not be removed.

These include one of Facebook founder Mark Zuckerberg, and a doctored video of US House Speaker Nancy Pelosi edited to make it seem like she’s struggling to speak.

“Facebook wants you to think the problem is video-editing technology, but the real problem is Facebook’s refusal to stop the spread of disinformation,” a spokesperson for Pelosi said.

A spokesperson for US presidential candidate Joe Biden also criticised Facebook’s new policy.

“Facebook’s announcement today is not a policy meant to fix the very real problem of disinformation that is undermining faith in our electoral process, but is instead an illusion of progress,” the spokesperson said.

“Banning deepfakes should be an incredibly low floor in combating disinformation.”

Manipulated videos that do remain on Facebook may be flagged with a “false” tag, Bickert said.

“This approach is critical to our strategy and one we heard specifically from our conversations with experts,” she said.

“If we simply remove all manipulated videos flagged by fact-checkers as false, the videos would still be available elsewhere on the internet or social media ecosystem.

“By leaving them up and labelling them as false, we’re providing people with important information and context.”

Facebook also recently launched the Deepfake Detection Challenge, offering $US10 million in grants for research and open-source tools that can detect deepfakes online.

Facebook has also partnered with news agency Reuters to deliver a free online training course to help newsrooms recognise deepfakes and manipulated pieces of media.

Deepfake content became more prevalent last year, with the technique even used to scam individuals and businesses out of significant amounts of money.

Apps have also emerged that make it easy for anyone to create their own manipulated videos.