Thinking of providing your passport details to verify your identity on sites like LinkedIn? Think twice: a new analysis has found that a simple identity check can pass your personal details through no fewer than 17 different companies – and cyber criminals are watching.

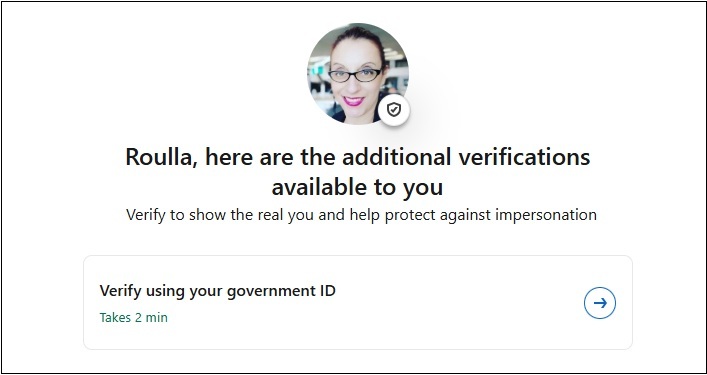

The warning comes from ‘Rogi’, an EU resident who completed LinkedIn’s identity verification process to score the ubiquitous tick – and then, after the three-minute check of his passport details, started to question where the data was going.

“In a sea of fake recruiters, bot accounts, and AI-generated headshots,” he recounts, “it seemed like a smart thing to do… [then] I went and read the privacy policy and terms of service.”

LinkedIn, it turns out, uses identity verification services from US firm Persona, which offers a broad range of services to verify passports, driver licences and other identification documents from over 200 countries and territories.

Persona’s verification process collects personal data including full name, passport photo, live selfie, facial geometry, NFC chip data from inside the passport, national ID number, nationality, sex, birthdate, contact details, IP address, geolocation, and more.

This data was checked against government databases, national ID registries, consumer credit agencies, utility companies, mobile network providers, and postal address databases.

“I scanned my passport for a checkmark,” Rogi said, “and they ran a background check.”

Furthermore, he discovered, this data was shared across a network of ‘subprocessors’ including Anthropic, OpenAI, Groqcloud, AWS, Google Cloud Platform, Resistant AI, FingerprintJS, MongoDB, Snowflake, Elasticsearch, Confluent, DBT, Tableau, and others.

Several of these companies – including Snowflake, Elasticsearch, and Tableau – have been affected by security vulnerabilities or full-fledged data breaches, while others are deeply engaged in data extraction and analysis for profit.

“Your government-issued identity document is being fed through the same companies that build large language models and AI systems,” Rogi said.

“I came for a badge [and] I stayed as training data.”

Mass verification or mass privacy breach?

The fact that none of the companies are based in the EU – and that the transfer of his data was therefore a potential GDPR violation of the type that saw Meta recently fined $2 billion for moving Irish data to the US – further unsettled Rogi.

“You scanned your European passport for a European professional network, and your data went exclusively to North American companies,” he said, adding that “every single company that processes your data is American… the CLOUD Act applies to all 16 of these.”

Persona co-founder and CEO Rick Song pushed back, arguing in a comment on the post that the list of subprocessors is “unfortunately misleading”, that “no personal data processed is used for AI/model training,” and that all biometric personal data is deleted immediately.

Be aware of the risks of handing your government ID for a platform tick. Photo: Supplied

Just eight subprocessors – AWS, Confluent, DBT, Elasticsearch, GCP, MongoDB, Sigma Computing and Snowflake – are used in identity verification, he said, calling the other companies a “superset of subprocessors used across all customers.”

Yet LinkedIn does retain personal data, with its terms of service confirming that the company stores the photos from government-issued IDs and that trusted partners “have sole access to [personal] data and it is deleted according to the applicable trusted partner’s privacy policy.”

Furthermore, US, Canadian and Mexican customers are verified using a different third-party partner called CLEAR – an entirely separate vector for personal data that has raised its own concerns for many LinkedIn users questioning the company’s terms of service.

“I was tempted to verify my account,” noted Amazon senior user experience designer Allison Boyd, “but now I’m not so sure.”

Feeding the cybercrime machine

The companies involved in the verification chain all take measures to protect personal data, but sharing it exposes that data to a range of different and potentially inconsistent privacy policies and technological security protections, increasing the risk of its mass compromise.

Just last month, IDMerit – which performs user identity verification for clients in the US, Canada, Australia, Mexico and elsewhere – was named as the source of a massive data leak of 1 billion personal records, including 12 million from Australia.

Cybercriminals are tracking these developments closely and understand that AI can help them exploit LinkedIn to harvest personal data. As a recent project by Trend Micro security experts found, that data can then be easily used to build detailed profiles and target victims more effectively.

In under 24 hours, the Trend Micro team used Claude Code to vibe-code a web application and used web scraping tool Apify – which the researchers said can work around LinkedIn’s built-in anti-scraping protections “at scale with high success rates” – to collect LinkedIn data.

The application, built on a base ‘marketing’ website created with AI in less than three minutes, automatically tapped the LinkedIn data to personalise the website’s content – increasing its appeal to visitors, who could easily be tricked into visiting malicious sites.

While the researchers limited their work to publicly accessible information, its implications are painfully clear given revelations about how much data is routinely collected during ID verification.

“A process like this would have cost many man-hours in the past,” the researchers said, but “with today’s AI-powered tools we are able to build this level of automation and AI processing within approximately 24 hours.”

It’s “something that threat actors will be able to do more efficiently,” they added, noting that “the implications for the security of enterprises are significant: the public LinkedIn activity of their employees is now a rich source of intelligence for highly targeted attacks.”