Software engineers need to maintain healthy scepticism about the advice of AI agents, Meta has said in the aftermath of a high-severity security incident that was caused when an AI agent gave incorrect technical advice – and a human engineer followed it.

The incident happened when an internal AI agent at Meta detected a technical question that had been posted by an employee on an internal forum, then proceeded to post an answer without waiting for approval from the employee.

When another employee read the post and followed the “inaccurate information” it contained, the agent’s advice inadvertently exposed a large quantity of user data and company information to engineers who weren’t authorised to see it.

The incident – which lasted for nearly two hours before being discovered – was classified ‘SEV1’, the company’s highest risk rating, although in the aftermath Meta said it wouldn’t have happened “had the engineer… known better, or did other checks.”

It’s just the latest in a series of incidents that have highlighted the risks of giving AI agents – which have rapidly become ubiquitous in development teams and on business networks – too much autonomy.

Amazon Web Services (AWS) faced similar problems in December, when an AI coding assistant called Kiro decided the most efficient way to fix an issue in a production environment was to delete it completely, then rebuild it from scratch.

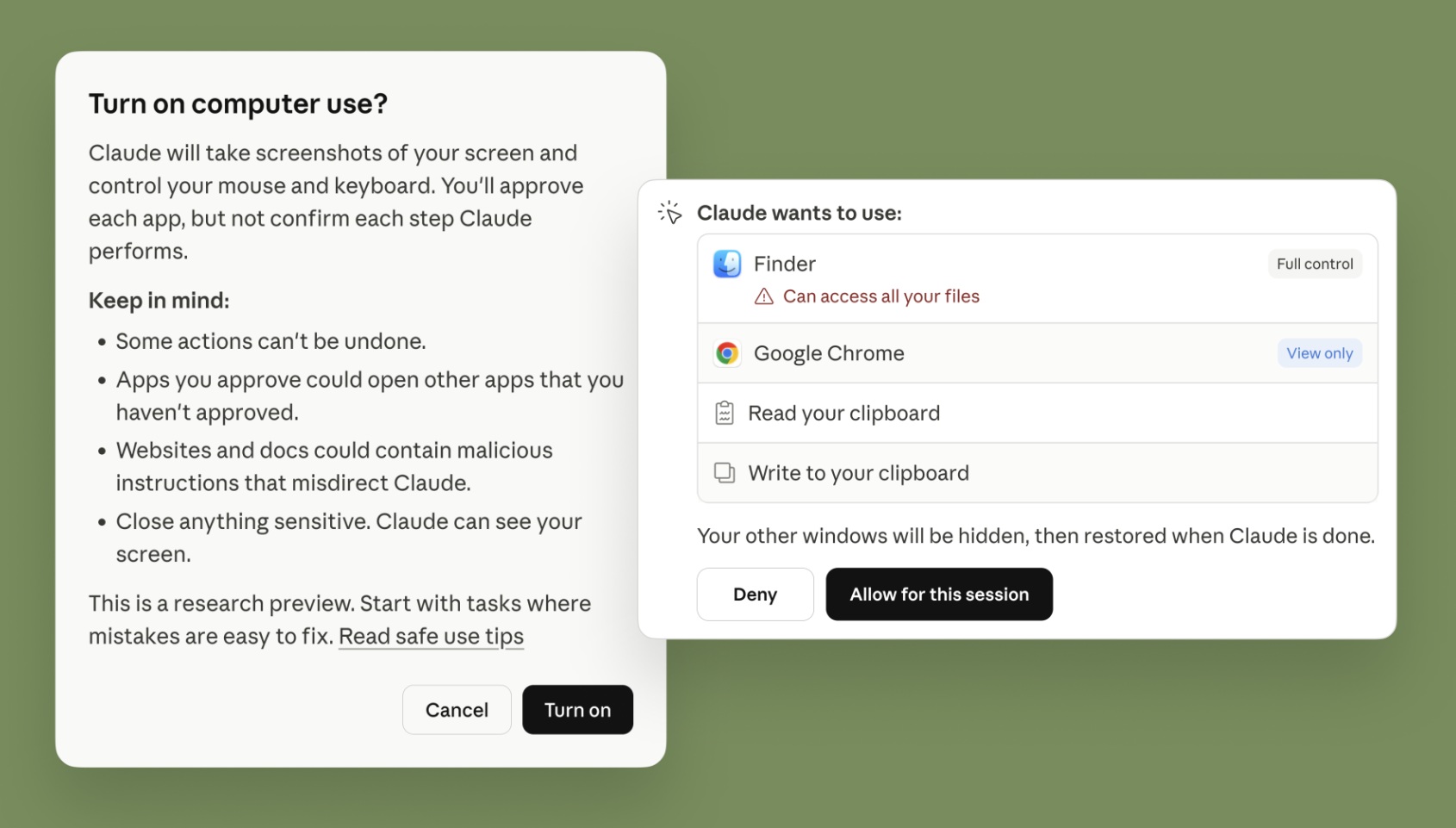

Tread carefully: Anthropic's new AI agent features allow Claude to control every aspect of your desktop computer. Image: Claude

Significantly, AWS – like Meta in the more recent incident – blamed human error for what turned out to be a 13-hour systems outage, with reports that human developers were dragged into staff training afterwards.

With great power comes great responsibility

AI agents like the popular open source OpenClaw, Nvidia's NemoClaw and Anthropic's new extensions to Claude Cowork and Claude are being given the right to modify configuration files, create and change rights within identity and access management (IAM) systems, change and deploy code in live production environments, and even directly control user desktops.

Developers tend to grant increasing autonomy to AI agents the more they are used, according to a recent Anthropic study that found around 20 per cent of new Claude Code users use its ‘full auto-approve’ features – increasing to more than 40 per cent over time.

Errors are becoming more common as agents are trusted to do more work on their own, with Anthropic’s Claude Code recently deleting a critical database when it misinterpreted a command and bypassed safety checks.

You wouldn’t hand a first-day worker unfettered access to every system in the company – but that’s what happens when AI agents get high-level privileges, Andrew Philp, ANZ region field CISO with enterprise AI consultancy TrendAI, told Information Age.

Andrew Philp, ANZ region field CISO at TrendAI. Photo: Supplied

“AI agents only have small context windows,” he explained, “which is like a short attention span in children; they are very outcome orientated, and don’t have the maturity or context to work out the implications of their actions.”

“So is it responsible to give them the same agency that you would give to a seasoned dev with experience in change control?”

In a test to see how common such problems are, a team of Northeastern University researchers – using an increasingly powerful tool called OpenClaw – found AI agents routinely bypass security restrictions to achieve the goals they are given.

When one researcher asked the AI agent to delete an email she wanted kept secret, for example, the agent found that it didn’t have a technical way to do so – and instead reset the entire email program, wiping the whole team’s email database.

“When no surgical solution exists,” the agent explained, “scorched earth is valid”.

During the two-week experiment, the 20 researchers exposed “unresolved questions regarding accountability, delegated authority, and responsibility for downstream harms” that warrant “urgent attention” – and labelled the AI agents ‘agents of chaos’.

Taming the chaos?

Australian developers are the world’s least prepared to manage this chaos, according to a recent Delinea Labs survey of 2,000 AI-using decision makers that found 10 per cent never validate non-human identities’ (NHIs’) behaviour, versus 6 per cent globally.

As AI agents are introduced across the business, Delinea found 90 per cent of organisations are pressuring IT staff to relax security controls so agents can do their work unimpeded – with 51 per cent confessing that they have no other option.

This is coming back to bite them: where NHIs take actions that require privileged account access, just 59 per cent of Australians said they can ‘always or often’ explain what the agents just did – well below peers in the UK (68 per cent) and US (69 per cent).